Matrix-Vector Products as Linear Transformations (Linear Algebra)

Solution. First, we have just seen that \(T(\vec{v}) = \operatorname{proj}_{\vec{u}}(\vec{v})\) is linear. Therefore, by Theorem 5.2.1, we can find a matrix \(A\) such that \(T(\vec{x}) = A\vec{x}\). The columns of the matrix for \(T\) are defined above as \(T(\vec{e}_i)\). It follows that \(T(\vec{e}_i) = \operatorname{proj}_{\vec{u}}(\vec{e}_i)\) gives the \(i\)th column of the desired matrix. More generally, any linear transformation \(T:\mathbb{R}^n \to \mathbb{R}^m\) can be represented as a matrix-vector product in the same way.
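As a minimal sketch of this construction, the following builds the matrix of the projection column by column from \(\operatorname{proj}_{\vec{u}}(\vec{e}_i)\) and checks it against the projection formula \(\operatorname{proj}_{\vec{u}}(\vec{v}) = \frac{\vec{v}\cdot\vec{u}}{\vec{u}\cdot\vec{u}}\,\vec{u}\). The vectors `u` and `v` and all function names are illustrative assumptions, not taken from the source; exact fractions are used to avoid rounding.

```python
from fractions import Fraction

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def proj(u, v):
    """Orthogonal projection of v onto the line spanned by a nonzero vector u."""
    scale = Fraction(dot(v, u), dot(u, u))
    return [scale * ui for ui in u]

def standard_basis(n):
    """The standard basis vectors e_1, ..., e_n of R^n."""
    return [[1 if i == j else 0 for i in range(n)] for j in range(n)]

def projection_matrix(u):
    """Matrix A (as a list of rows) whose i-th column is proj_u(e_i)."""
    columns = [proj(u, e) for e in standard_basis(len(u))]
    # Transpose the list of columns into a list of rows.
    return [list(row) for row in zip(*columns)]

def matvec(A, x):
    """Row-by-row matrix-vector product A x."""
    return [dot(row, x) for row in A]

# Hypothetical example vectors, chosen only for illustration.
u = [1, 2, 3]
v = [4, 0, -1]
A = projection_matrix(u)

# The matrix built from the columns proj_u(e_i) reproduces the projection.
assert matvec(A, v) == proj(u, v)
```

Building the matrix from the images of the standard basis vectors uses only the fact that the projection is linear, so the same helper pattern should apply to any other linear map.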

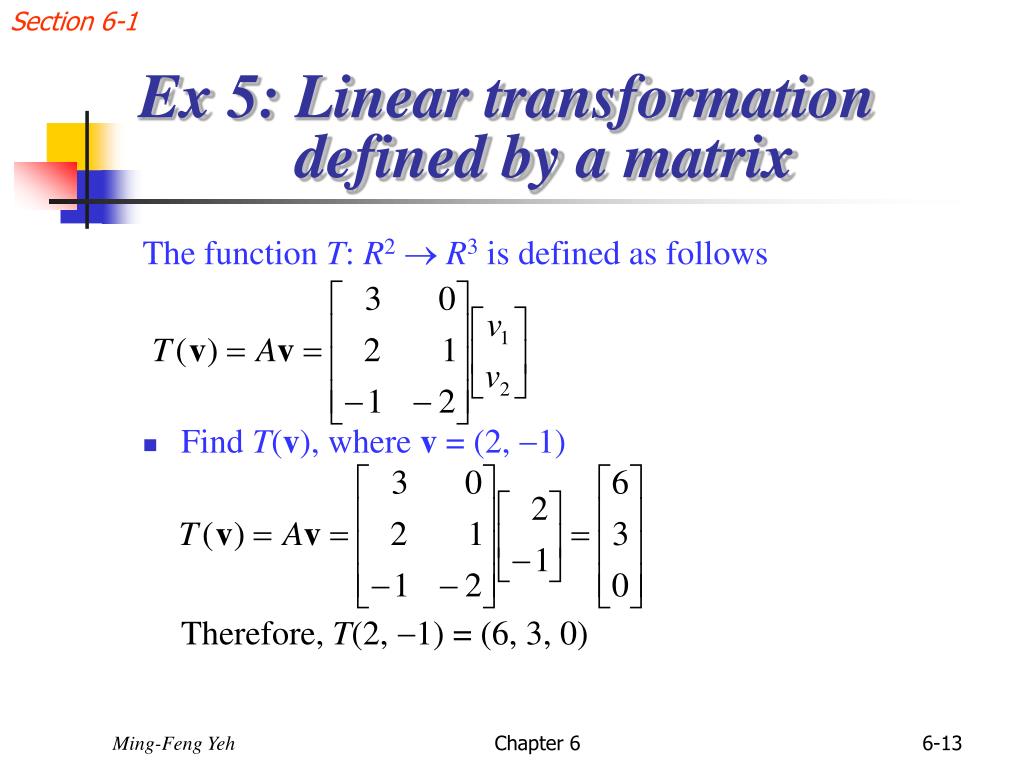

Definition 2.4.1. The product \(A\mathbf{x}\) of an \(m \times n\) matrix \(A = [\mathbf{a}_1 \; \mathbf{a}_2 \; \dots \; \mathbf{a}_n]\) with a vector \(\mathbf{x} = [x_1 \; x_2 \; \dots \; x_n]^T \in \mathbb{R}^n\) is defined as

\[A\mathbf{x} = x_1\mathbf{a}_1 + x_2\mathbf{a}_2 + \dots + x_n\mathbf{a}_n.\]

So \(A\mathbf{x}\) is the linear combination of the columns of the matrix \(A\) with the entries of the vector \(\mathbf{x}\) as coefficients.

Put differently, the product of a matrix \(A\) by a vector \(\mathbf{x}\) is the linear combination of the columns of \(A\) using the components of \(\mathbf{x}\) as weights. If \(A\) is an \(m \times n\) matrix, then \(\mathbf{x}\) must be an \(n\)-dimensional vector, and the product \(A\mathbf{x}\) is an \(m\)-dimensional vector. If \(A = [\mathbf{v}_1 \; \mathbf{v}_2 \; \dots \; \mathbf{v}_n]\) and \(\mathbf{x} = [c_1 \; c_2 \; \dots \; c_n]^T\), then

\[A\mathbf{x} = c_1\mathbf{v}_1 + c_2\mathbf{v}_2 + \dots + c_n\mathbf{v}_n.\]

Theorem 5.1.1: Matrix transformations are linear transformations. Let \(T:\mathbb{R}^n \to \mathbb{R}^m\) be a transformation defined by \(T(\vec{x}) = A\vec{x}\), where \(A\) is an \(m \times n\) matrix. Then \(T\) is a linear transformation. It turns out that every linear transformation from \(\mathbb{R}^n\) to \(\mathbb{R}^m\) can be expressed as a matrix transformation, and thus linear transformations between these spaces are exactly the same as matrix transformations.

And now, let us return to matrix transformations.

3.1.4. Standard matrix for a linear transformation. We have seen that every matrix transformation is a linear transformation. In this subsection we will show that, conversely, every linear transformation \(T:\mathbb{R}^n \to \mathbb{R}^m\) can be represented by a matrix transformation.
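The two ideas above, the product as a linear combination of columns and the standard matrix whose columns are the images of the standard basis vectors, can be sketched together as follows. The map `T` below is a hypothetical linear transformation from \(\mathbb{R}^3\) to \(\mathbb{R}^2\) invented for illustration, and the function names are assumptions rather than anything defined in the text.

```python
def matvec_by_columns(columns, x):
    """Compute A x as x_1 a_1 + ... + x_n a_n, where `columns` lists the columns of A."""
    if len(columns) != len(x):
        raise ValueError("x must have one entry per column of A")
    m = len(columns[0])
    result = [0] * m
    for coeff, col in zip(x, columns):
        for i in range(m):
            result[i] += coeff * col[i]
    return result

def standard_matrix_columns(T, n):
    """Columns T(e_1), ..., T(e_n) of the standard matrix of a linear map T on R^n."""
    return [T([1 if i == j else 0 for i in range(n)]) for j in range(n)]

# Hypothetical linear map T: R^3 -> R^2, used only as an example.
def T(x):
    x1, x2, x3 = x
    return [x1 + 2 * x2, 3 * x3 - x2]

columns = standard_matrix_columns(T, 3)
# The columns are T(e_1) = [1, 0], T(e_2) = [2, -1], T(e_3) = [0, 3].
assert columns == [[1, 0], [2, -1], [0, 3]]

# Multiplying by the standard matrix agrees with applying T directly.
x = [2, -1, 4]
assert matvec_by_columns(columns, x) == T(x)
```

Storing the matrix as a list of columns is a choice made here so that the code mirrors the definition term by term; a row-based layout would give the same result.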
